FROM STAGNATION TO

Unlocking your potential & skyrocketing your performance. engagement.

Technology

By Curtis Isozaki, M.A., CF-LSP

Whenever a new technology emerges, organizations often misuse it, and unequal access and resources can widen the equity gap and, with it, the entrepreneurial gap. The age of AI is driving a seismic societal shift worldwide, which makes it necessary to envision ethically the societal impact of artificial intelligence and related tools like Replit. Without ethical guidelines and safeguards, AI risks undermining transparency and autonomy and harming vulnerable populations (Holmes et al., 2022). However, using AI will become a competency, like using email, web search, writing, and web application development, measured by the ability to leverage these tools and automate work with them. There is a risk in narrowing professional competency and identity to efficiency alone rather than continuing to engage in the meaningful dimensions of work: task integrity, skill cultivation and use, task significance, autonomy, and belongingness (Bankins & Formosa, 2023).

Ultimately, as these articles have shown with Replit, AI is influencing how we analyze data, design solutions, manage workflows, make decisions, and more (Parker & Grote, 2022). AI has become a strategic partner in both my professional and personal life, allowing me to spend more time thinking critically and creatively rather than manually organizing strategies, systems, and frameworks.

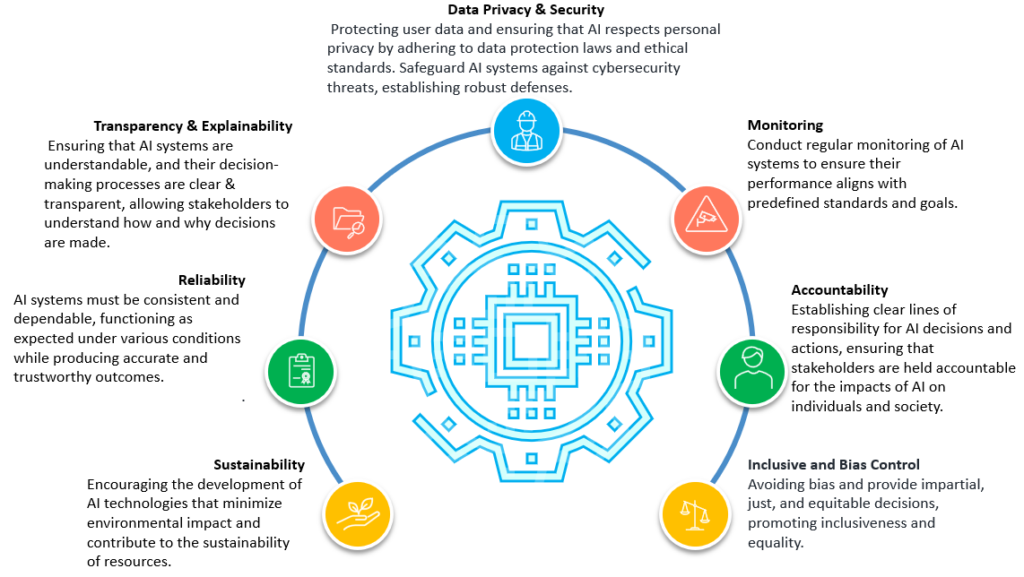

Photo Credit: Blackstraw AI. (n.d.). AI governance: The path to responsible and secure AI development. Blackstraw. Retrieved from https://blackstraw.ai/blog/ai-governance-the-path-to-responsible-and-secure-ai-development/

As application development becomes accessible through Replit, security and governance must be strengthened to enable responsible application development. Replit incorporates foundational security measures, including encrypted HTTPS, containerized isolation, collaboration permission controls, and more. Containerization runs each project in its own isolated space within Replit’s computing environment, reducing cross-project vulnerabilities and providing standardized security for AI application development. Development also requires verification steps, including validation, secure password compliance, API key protection, and database access permissions.
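As one illustration of the API key protection mentioned above, here is a minimal Python sketch. It is not Replit's own implementation. It assumes the key is stored as an environment variable instead of being written into the source code; the variable name `EXAMPLE_API_KEY` and the helpers `load_api_key` and `mask_secret` are hypothetical names chosen for this example.

```python
import os


def load_api_key(name: str = "EXAMPLE_API_KEY") -> str:
    """Read a secret from the environment instead of hard-coding it.

    `name` is a hypothetical variable name used only for illustration.
    """
    key = os.environ.get(name)
    if not key:
        # Fail early with a clear message rather than sending an empty key.
        raise RuntimeError(f"Missing secret: set the {name} environment variable")
    return key


def mask_secret(secret: str, visible: int = 4) -> str:
    """Hide all but the last `visible` characters so logs never show the full key."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

An application would call `load_api_key()` once at startup and pass only `mask_secret(key)` to any log or error message, so the full key never appears in shared code or output.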

According to the National Institute of Standards and Technology (2023), AI systems must be evaluated using structured risk management frameworks to ensure transparency, accountability, and ongoing monitoring. From a governance perspective, AI raises questions about intellectual property, data bias, data privacy, and more. For example, AI-generated code could draw patterns from open-source repositories, prompting greater consideration of attribution in large language models. Although there are risks, Replit has expanded access to technological agency through ethical oversight, security, and development practices that support innovation without compromising trust in human agency in AI-assisted development. Ultimately, Replit embodies both the opportunity and the responsibility to compress the distance between idea and implemented application, elevating the standards for digital stewardship and scalability for entrepreneurial success. Envisioning the societal impact of Replit requires ongoing accountability for security and governance in responsible application development in our world today.

References:

Bankins, S., & Formosa, P. (2023). The ethical implications of artificial intelligence (AI) for meaningful work. Journal of Business Ethics, 185(4), 725–740.

Blackstraw AI. (n.d.). AI governance: The path to responsible and secure AI development. Blackstraw. Retrieved from https://blackstraw.ai/blog/ai-governance-the-path-to-responsible-and-secure-ai-development/

Holmes, W., Persson, J., Chounta, I.-A., Wasson, B., & Dimitrova, V. (2022). Artificial intelligence and education: A critical view through the lens of human rights, democracy and the rule of law (pp. 5–32). Council of Europe.

National Institute of Standards and Technology. (2023). Artificial Intelligence risk management framework (AI RMF 1.0) (NIST AI 100-1). U.S. Department of Commerce.

Parker, S. K., & Grote, G. (2022). Automation, algorithms, and beyond: Why work design matters more than ever in a digital world. Applied Psychology, 71(4), 1171–1204.

February 15, 2026
